Wednesday, March 18, 2026

The paradox of AI cannibalism

About the Author

From Arapiraca, Brazil, Tales is a software engineer and chatbot specialist at Citizen Code, working at the intersection of conversational design, engineering, and real-world deployment. He focuses on building and refining chat-based systems across platforms like RapidPro and Turn.io, supporting high-volume, youth-facing services across Africa and other Global South contexts.

He began his journey in this space at Ilhasoft, where he worked as a Cognitive Developer on chatbot systems for organisations like UNICEF and other UN programmes. During this time, he contributed to AI-enabled chatbots and large-scale services such as U-Report, developing a strong foundation in how conversational systems perform in live environments at scale.

At Citizen Code, Tales works across both chatbot strategy and engineering, shaping how conversational platforms deliver critical information and services to young people. His work is grounded in real-world constraints like scale, cost, connectivity, and behaviour, and aligned with Citizen Code’s mission to build technology that is inclusive, context-aware, and designed for the environments it serves.

The recent explosion in the popularity of Artificial Intelligence (AI) tools, particularly large language models (LLMs) like OpenAI's ChatGPT (launched November 2022) and image generators such as DALL-E (DALL-E 2 released April 2022), has brought AI into the mainstream consciousness. These applications have captivated the public with their ability to generate human-like text, create stunning images, and automate complex tasks. However, the perception of AI as a sudden, benevolent new force overlooks its long and often opaque history.

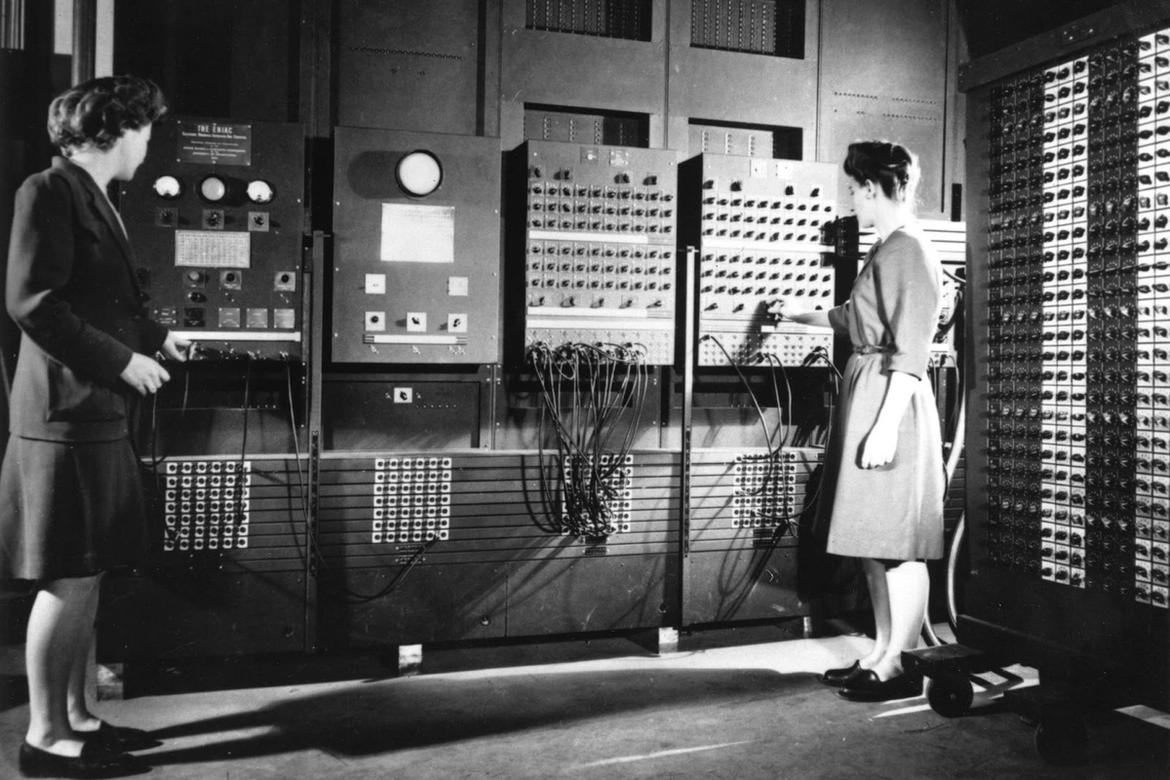

For decades before these public releases, advanced AI capabilities were developed and deployed largely within restricted environments, notably military and private institutions. These early applications focused on areas such as strategic analysis, logistics optimization, surveillance, and specialized data processing, far removed from the public eye. The shift from these niche, controlled applications to widespread public access has exposed a fundamental characteristic of many modern generative AI systems: they are proficient "convincing liars" rather than infallible purveyors of truth.

This inherent trait stems from their design to predict and generate plausible outputs based on patterns in their training data, not to ascertain factual accuracy or logical consistency. A significant and increasingly problematic side effect of this generative capability is the phenomenon of AI hallucination. Hallucinations occur when AI models confidently present false, misleading, or nonsensical information as fact, often without any internal mechanism to recognize their own errors. This paradigm of generating plausible but untrue content poses a profound challenge, particularly as these systems become more integrated into daily life and their outputs begin to feed back into the very data pools used for their training. This recursive process sets the stage for a more insidious problem: AI cannibalism, or model collapse.

AI cannibalism, technically known as model collapse, is a degenerative process that occurs when generative AI models are trained predominantly on data produced by other AI models. This feedback loop creates a "curse of recursion," in which the nuances of original human information are lost and model outputs converge towards a simplified, homogeneous, and often hallucination-filled picture of reality.

- Model collapse: Degradation of models trained on synthetic data. Consequence: loss of diversity and precision in information.

- AI cannibalism: AI "feeding" on its own content. Consequence: intellectual stagnation and replication of errors.

- JPEG effect: An analogy for the quality lost with each generation. Consequence: information becomes a "copy of a copy" without substance.

The critical perspective: Professor Miguel Nicolelis

Brazilian neuroscientist Miguel Nicolelis is one of the most critical and well-founded voices on the impact of AI on human cognition. His research focuses on the fundamental distinction between digital processing and biological complexity.

Nicolelis argues that society is creating "millions of digital zombies" [1]. According to him, continuous exposure to AI algorithms and screens is causing an atrophy of human intellectual capacity. For the professor, the brain is not a computing machine; it is an organic system that requires interaction with the physical world to maintain its plasticity and higher cognitive functions.

"The human brain does not operate according to digital logic and can never be replaced by machines. We are losing the ability to act individually and collectively to build the human condition." — Miguel Nicolelis.

Nicolelis emphasizes that by delegating content creation and decision-making to self-feeding AIs, we are disconnecting the foundation of human knowledge from biological reality. This creates a cycle where AI not only "hallucinates" but also shapes human perception to accept these hallucinations as truth, accelerating the degradation of collective intelligence.

The "curse of recursion" and data science specialists

Controlled experiments on model training lend empirical support to the technical side of these concerns.

The seminal paper "The Curse of Recursion: Training on Generated Data Makes Models Forget" [2] demonstrates that, after a few generations of training on synthetic data, models begin to "forget" the tails of the data distribution (rare events and complex nuances). The result is an AI that produces only the "center" of the distribution, becoming predictable and ignorant of the diversity of human knowledge.
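The tail-forgetting effect can be illustrated with a toy simulation. The sketch below is not the experiment from the paper; it assumes a hypothetical "language" of 1,000 tokens with Zipf-like frequencies, and each "model" is simply the empirical frequency table of a sample drawn from the previous generation. The names `zipf_distribution`, `next_generation`, and `simulate`, along with all parameter values, are illustrative assumptions.

```python
import random
from collections import Counter


def zipf_distribution(vocab_size):
    """Hypothetical 'human' data: token i has weight 1 / (i + 1)."""
    weights = [1.0 / rank for rank in range(1, vocab_size + 1)]
    total = sum(weights)
    return {token: w / total for token, w in enumerate(weights)}


def next_generation(dist, sample_size, rng):
    """'Train' a new model on samples generated by the previous one.

    The new model is just the empirical frequency of each sampled token,
    so any token that is never sampled disappears from its vocabulary.
    """
    tokens = list(dist)
    probs = [dist[t] for t in tokens]
    sample = rng.choices(tokens, weights=probs, k=sample_size)
    counts = Counter(sample)
    return {t: c / sample_size for t, c in counts.items()}


def simulate(vocab_size=1000, sample_size=2000, generations=10, seed=0):
    """Return the number of distinct tokens each generation can still produce."""
    rng = random.Random(seed)
    dist = zipf_distribution(vocab_size)
    support_sizes = [len(dist)]
    for _ in range(generations):
        dist = next_generation(dist, sample_size, rng)
        support_sizes.append(len(dist))
    return support_sizes


if __name__ == "__main__":
    for generation, size in enumerate(simulate()):
        print(f"generation {generation}: {size} distinct tokens remain")
```

Because a token that drops out can never be sampled again, the count of surviving tokens can only stay flat or shrink from one generation to the next. The rare tokens in the tail are the first to go, which is the same qualitative pattern the paper describes for real models.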

Jaron Lanier, a virtual reality pioneer and Microsoft researcher, complements this view by stating that "there is no AI," but rather a form of human collaboration mediated by algorithms [3]. Lanier warns that data cannibalism devalues unique human contributions. If AI consumes itself, it loses "contact with the ground" (real human data), becoming a closed system of statistical delusions.

Essential virtues and benefits of robust AI

While the risks of model collapse are real, it is crucial to recognize that AI, when implemented with solid data foundations and human oversight, provides indispensable benefits for social progress, scientific research, and operational efficiency.

AI is transforming the public sector by making social programs more efficient and accessible. By processing vast datasets, governments can better identify vulnerable populations and optimize the distribution of resources.

- Improved resource allocation: AI-powered analytics help governments predict where social assistance is most needed, reducing waste and ensuring aid reaches the correct recipients [4].

- Streamlined public services: Automation of administrative tasks in public agencies allows civil servants to focus on complex cases that require human empathy and judgment, increasing the overall responsiveness of government institutions [5].

In the realm of science, AI is not a replacement for the human mind but a powerful "exoskeleton" for it. It excels at organizing and finding patterns in data that would take humans centuries to process.

- Discovery of new frontiers: AI has been instrumental in breakthroughs such as protein folding (AlphaFold) and climate modeling, enabling scientists to make more accurate projections about the planet's future [6].

- Data discoverability: Organizations like NASA use AI to tag and index massive amounts of satellite data, making it discoverable for researchers worldwide and accelerating the pace of discovery [7].

Solid AI applications are essential for maintaining the complex systems that sustain modern life.

- Operational efficiency: In industries ranging from energy to logistics, AI optimizes supply chains and monitors infrastructure for potential failures, preventing accidents and reducing environmental impact [8].

- Data processing at scale: AI's ability to process and organize information at scale is vital for modern finance, healthcare diagnostics, and cybersecurity, where the volume of data exceeds human processing capacity [9].

A balanced path forward

AI cannibalism is a significant civilizational challenge that demands high-quality, human-generated data to sustain model integrity. However, the solution is not the abandonment of the technology, but its disciplined and ethical application. As Nicolelis warns, we must protect human intellectual capacity, but as modern research shows, we can also use AI to augment that capacity, solving complex social and scientific problems that were previously out of reach. The future of AI lies in a symbiotic relationship where human creativity provides the "soul" and "grounding," while AI provides the "scale" and "efficiency."

AI-driven pre-diagnostic screening in Japan

Japan is a global leader in integrating Artificial Intelligence (AI) into clinical workflows, particularly for pre-diagnostic screening and patient triage. This shift is driven by the need to optimize resources in one of the world's most rapidly aging societies.

Research and implementation in Japanese hospitals have shown that AI can analyze complex clinical patterns to identify diseases before traditional symptoms become critical.

- Emergency triage: AI models like "Emergency AI" are used to swiftly analyze patient history and vital signs in emergency departments [10]. Result: faster diagnosis and improved examination accuracy in high-pressure situations.

- Early cancer detection: AI analysis of microRNA patterns in urine (e.g., miSignal Scan) or radiomic features in pre-diagnostic CT scans [11]. Result: early identification of progressive diseases like pancreatic cancer, often missed by human eyes.

- Optical diagnosis: Real-time AI-based optical diagnosis during procedures like colonoscopies to distinguish between lesion types [12]. Result: immediate, high-precision feedback that assists doctors in making intra-operative decisions.

While Professor Miguel Nicolelis warns against replacing human sensitivity with digital logic, the Japanese case demonstrates that solid AI implementation serves as a vital support system:

- Reimbursement and Safety: The Japanese government provides reimbursements for hospitals using AI-powered diagnostic software, provided they follow strict safety and management guidelines [13].

- Pattern Recognition: AI excels at identifying subtle "clinical signatures" across thousands of data points—a task that exceeds human cognitive capacity for large-scale screening.

The use of AI in Japanese hospitals represents a hybrid model: AI handles the heavy lifting of pattern analysis and initial screening, while human specialists make the final clinical decisions. This "solid use" of technology is essential for maintaining healthcare quality in the face of increasing data complexity and patient volume.
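The division of labour in this hybrid model can be sketched in a few lines. The example below is purely illustrative and does not describe any actual hospital system: `ScreeningResult`, `build_review_queue`, the risk score range, and the 0.8 threshold are all hypothetical assumptions. The point it shows is structural: the model only ranks and labels cases, and the final decision field is always left for a clinician to fill in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScreeningResult:
    patient_id: str
    risk_score: float  # hypothetical model output, assumed to lie in [0, 1]


def build_review_queue(results, urgent_threshold=0.8):
    """Order screened cases for human review, highest risk first.

    The model output only sets a review priority. 'final_decision' stays
    None for every case, because that step belongs to a human specialist.
    """
    queue = []
    for result in sorted(results, key=lambda r: r.risk_score, reverse=True):
        priority = "urgent" if result.risk_score >= urgent_threshold else "routine"
        queue.append(
            {
                "patient_id": result.patient_id,
                "risk_score": result.risk_score,
                "priority": priority,
                "final_decision": None,  # to be set by a clinician
            }
        )
    return queue


if __name__ == "__main__":
    screened = [
        ScreeningResult("patient-a", 0.35),
        ScreeningResult("patient-b", 0.92),
        ScreeningResult("patient-c", 0.80),
    ]
    for case in build_review_queue(screened):
        print(case["patient_id"], case["priority"], case["final_decision"])
```

Keeping low-risk cases in the queue, instead of letting the model dismiss them, is a deliberate choice in this sketch: it reflects the article's premise that screening supports clinical judgment and does not replace it.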

References and Recommended Readings

1. Nicolelis, M. (2025). Estamos criando milhões de zumbis digitais. ONU News.

2. Shumailov, I. et al. (2023). The Curse of Recursion: Training on Generated Data Makes Models Forget. arXiv.

3. Lanier, J. (2023). There Is No A.I. The New Yorker.

4. BCG (2025). Benefits of AI in Government.

5. OECD (2025). Governing with Artificial Intelligence.

6. MIT Science (2024). Researchers Explore Mutual Benefits of AI and Science.

7. NASA Science Data (2025). Revolutionizing Scientific Discovery with AI.

8. IBM (2025). The most valuable AI use cases for business.

9. Databricks (2025). Top AI Use Cases Transforming Industries in 2025.

10. Asahi Shimbun (2025). AI system tested for swift medical diagnosis in emergency cases.

11. BioSpectrum Asia (2026). Cancer Diagnosis, Accelerated by AI.

12. NEJM Evidence (2022). Real-Time AI-Based Optical Diagnosis of Colorectal Polyps.

13. Fujita, S. et al. (2024). Advancing clinical MRI exams with artificial intelligence. PMC.
